Whether you’re working on VR, 3D printing, or innovative research, capturing real-world objects in three dimensions is an increasingly common need. Many technologies can help with this process. When people think of 3D capture, the first thing that often comes to mind is a laser scanner: a laser beam that moves across an object, capturing data about the surface. Laser scanners look very “Hollywood” and impressive.

Another common type of capture is structured-light capture. In structured-light 3D capture, different patterns are projected onto an object (using something like a traditional computer projector). A camera then looks at how the patterns are stretched or blocked, and calculates the shape of the surface from there. This is also how the Microsoft Kinect works, though it uses invisible (infrared) patterns.

Both of these approaches can deliver high-precision results, and each has its particular use cases. But they require specialized equipment, and often specialized facilities. There’s another technology that’s much more accessible: photogrammetry.

In very simple terms, photogrammetry involves taking many photos of an object from different sides and angles. Software then uses those photos to reconstruct a 3D representation, including the surface texture. The term photogrammetry actually covers a wide variety of processes and outputs, but in the 3D space, we’re specifically interested in stereophotogrammetry. This process involves finding the overlap between photos, that is, features that appear in more than one image. From there, the original camera positions can be calculated. Once you know the camera positions, you can use triangulation to calculate the position in space of any given point.
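To make the triangulation step concrete, here is a minimal sketch in Python. It assumes the two camera positions are already known and that each camera contributes a ray pointing toward the same feature. Because real rays rarely cross exactly, it returns the midpoint of the shortest segment between them. The function name and the sample coordinates are illustrative assumptions, and production photogrammetry tools use more elaborate methods across many photos at once.

```python
def triangulate_midpoint(cam1, dir1, cam2, dir2):
    """Estimate a 3D point from two camera rays (midpoint method).

    cam1, cam2: camera positions as (x, y, z) tuples.
    dir1, dir2: ray directions from each camera toward the feature.
    """
    def dot(u, v):
        return sum(a * b for a, b in zip(u, v))

    # Vector from the second camera to the first.
    w0 = tuple(a - b for a, b in zip(cam1, cam2))
    a = dot(dir1, dir1)
    b = dot(dir1, dir2)
    c = dot(dir2, dir2)
    d = dot(dir1, w0)
    e = dot(dir2, w0)

    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")

    # Distances along each ray to the points of closest approach.
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom

    p1 = tuple(o + s * k for o, k in zip(cam1, dir1))
    p2 = tuple(o + t * k for o, k in zip(cam2, dir2))

    # Split the difference between the two closest points.
    return tuple((u + v) / 2 for u, v in zip(p1, p2))


if __name__ == "__main__":
    # Two cameras one unit apart, both looking at a feature two units away.
    point = triangulate_midpoint((0, 0, 0), (0.5, 0, 2), (1, 0, 0), (-0.5, 0, 2))
    print(point)
```

The wider the angle between the two rays, the better constrained the result, which is one reason photos taken from clearly different positions help.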

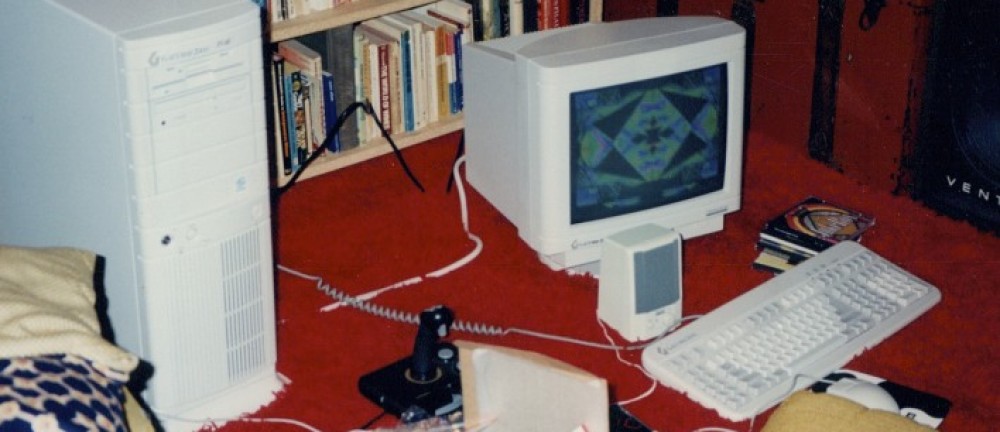

The process itself is very compute-intensive, so it needs a powerful computer (or a patient user). Photogrammetry benefits from very powerful graphics cards, so it’s currently best suited to use on customized PCs.

One of the most exciting parts of photogrammetry is that it doesn’t work only with photos taken in a controlled lighting situation. You can experiment with performing photogrammetry using a mobile device and the 123D Catch application. Photogrammetry can even be performed using still frames extracted from video, for example by walking around an object while recording.
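When working from video, you usually want only a subset of frames, spread evenly across the clip. The sketch below shows one simple way to choose which frames to keep. It is an illustration only: the function name is hypothetical, and actually decoding the video and saving the chosen frames would need a video library, which is not shown here.

```python
def pick_frame_indices(total_frames, wanted):
    """Return up to `wanted` frame indices spread evenly across a clip."""
    if total_frames <= 0 or wanted <= 0:
        return []
    if wanted >= total_frames:
        # Short clip: keep every frame.
        return list(range(total_frames))
    step = total_frames / wanted
    return [int(i * step) for i in range(wanted)]


if __name__ == "__main__":
    # A 30-second clip at 30 frames per second, reduced to 60 stills.
    indices = pick_frame_indices(total_frames=900, wanted=60)
    print(len(indices), indices[:5])
```

Even spacing is only a starting point. If you walk at an uneven pace, some stills will overlap more than others.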

For users looking to get better results, we’re going to be writing some guides on optimizing the process. A good-quality digital camera, a tripod, and some basic lighting equipment can dramatically improve the results.

Because it uses simple images, photogrammetry is also well suited to recovering 3D data from imaging platforms like drones or satellites.

Photogrammetry Software

There are a handful of popular tools for photogrammetry. One of the oldest and most established is PhotoScan from Agisoft. PhotoScan is a “power user” tool, which allows for many custom interventions to optimize the photogrammetry process. It can be pretty intimidating for new users, but in the right hands it’s very powerful.

An easier (and still very powerful) alternative is Autodesk ReMake. ReMake doesn’t expose the same level of control that PhotoScan has, but in many cases it can deliver a stellar result without any tweaking. It also has sophisticated tools for touching up 3D objects after the conversion process. An additional benefit is that it can output models for a variety of popular 3D printers. ReMake is also free for educational use.

There are also photogrammetry tools for specialized use cases. We’ve been experimenting with Pix4D, which is a photogrammetry toolset designed specifically for drone imaging. Because Pix4D knows about different models of drones, it can automatically correct for camera distortion and the types of drift that are common with drones. Pix4D also has special drone control software, which can handle the capture side, ensuring that the right number of photos is captured, with the right amount of overlap.
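To give a feel for the arithmetic behind overlap planning, here is a rough sketch in Python. It is not Pix4D’s method. It assumes a camera pointing straight down over flat ground, and it uses the standard pinhole relationship in which the ground width covered by one photo equals altitude times sensor width divided by focal length. The function names and the sample camera values are illustrative assumptions.

```python
import math


def photo_spacing(altitude_m, sensor_width_mm, focal_length_mm, overlap):
    """Distance to travel between shots for a given overlap fraction (0 to <1)."""
    if not 0 <= overlap < 1:
        raise ValueError("overlap must be at least 0 and less than 1")
    # Ground width covered by a single photo, in meters.
    footprint_m = altitude_m * sensor_width_mm / focal_length_mm
    # Each new photo may advance only by the part that does not overlap.
    return footprint_m * (1 - overlap)


def photos_needed(line_length_m, spacing_m):
    """Number of photos needed to cover one straight flight line."""
    return math.ceil(line_length_m / spacing_m) + 1


if __name__ == "__main__":
    # Hypothetical camera: 13.2 mm wide sensor, 8.8 mm lens, flown at 100 m.
    spacing = photo_spacing(100, 13.2, 8.8, overlap=0.8)
    print(round(spacing, 2), photos_needed(300, spacing))
```

Higher overlap means shorter spacing and more photos, which improves matching between images at the cost of longer flights and longer processing.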